Emotion Mirroring – Perfect Reflection of Audience & Video Content

Colin Pye

"If a tree falls in a forest and no one is around to hear it, does it make a sound?" is a philosophical question about observation and perception. Is it the same with expressing emotion: if nobody's around to see you smile, are you happy?

Social scenarios and cultural forces can have a profound effect on how people express their emotions when they know they’re being observed. In Japan, for example, social etiquette somewhat stifles the expression of emotions in public situations. Then there’s the falsification of expression, as the Marquise de Merteuil describes in 1988’s Dangerous Liaisons: “I learned how to look cheerful while, under the table, I stuck a fork into the back of my hand. I became a virtuoso of deceit. It wasn't pleasure I was after, it was knowledge.”

Mirror mirror on the wall.

In 2014, Realeyes created an #EmotionMirror for an exhibition at Cannes Lions. The mirror illustrated facial coding in action, matching facial landmarks to people’s expressions. Unsurprisingly, when people took their selfies in the mirror, there was a natural urge to exaggerate a smile, a frown or a look of surprise, as doing so amplified the image on the mirror. Looking at their reflection in the #EmotionMirror, people were naturally more conscious of their own image.

Emotional mirroring and 'mirror neurons'.

When audience emotions are collected using facial coding via webcam as they watch a video, the viewer is free from any social codes of expression. Interestingly, after testing over 13,600 videos to date, we can see that in certain emotionally significant scenes, audience emotions often mirror those of the protagonist within the content itself. So if the protagonist is disgusted or happy, the viewers tend to express these emotions back, despite there being nobody around to receive those expressions. Social norms and learned behavior over many generations may have taught us to respond to a happy scenario with a genuine smile. Neuroscientist Giacomo Rizzolatti first identified ‘mirror neurons’, neurons that could help explain how we read people’s emotions and mimic them to express empathy.

Being able to tap into this unobtrusively is most valuable to brands that want to ensure their content elicits the desired response. Our tests aggregate hundreds of emotional responses per video. The two examples below feature scenes that elicit genuine emotional reactions and illustrate how audience emotions reflect those of the protagonist.

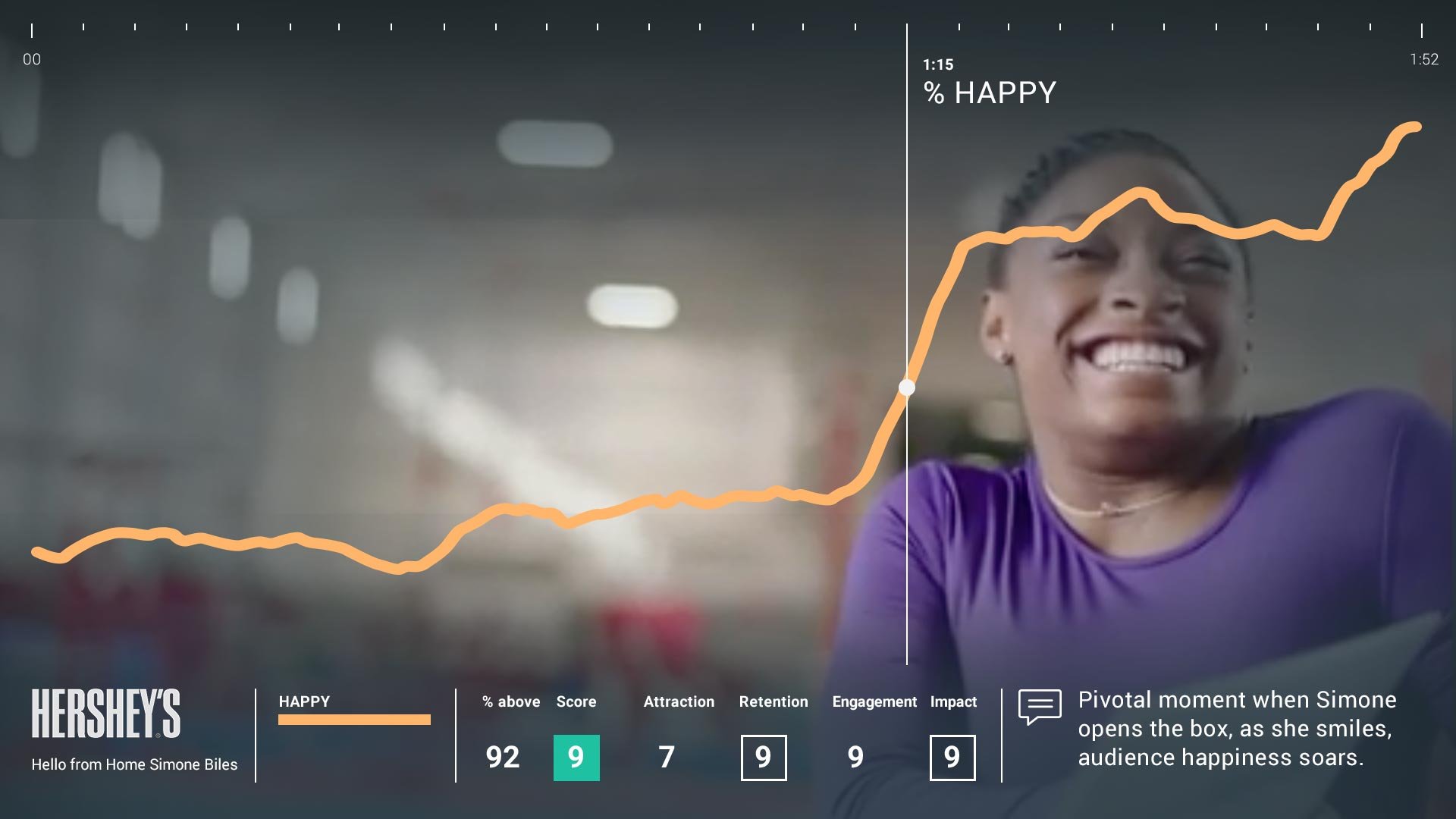

Example 1. Hershey's 'Home from Home ft Simone Biles'

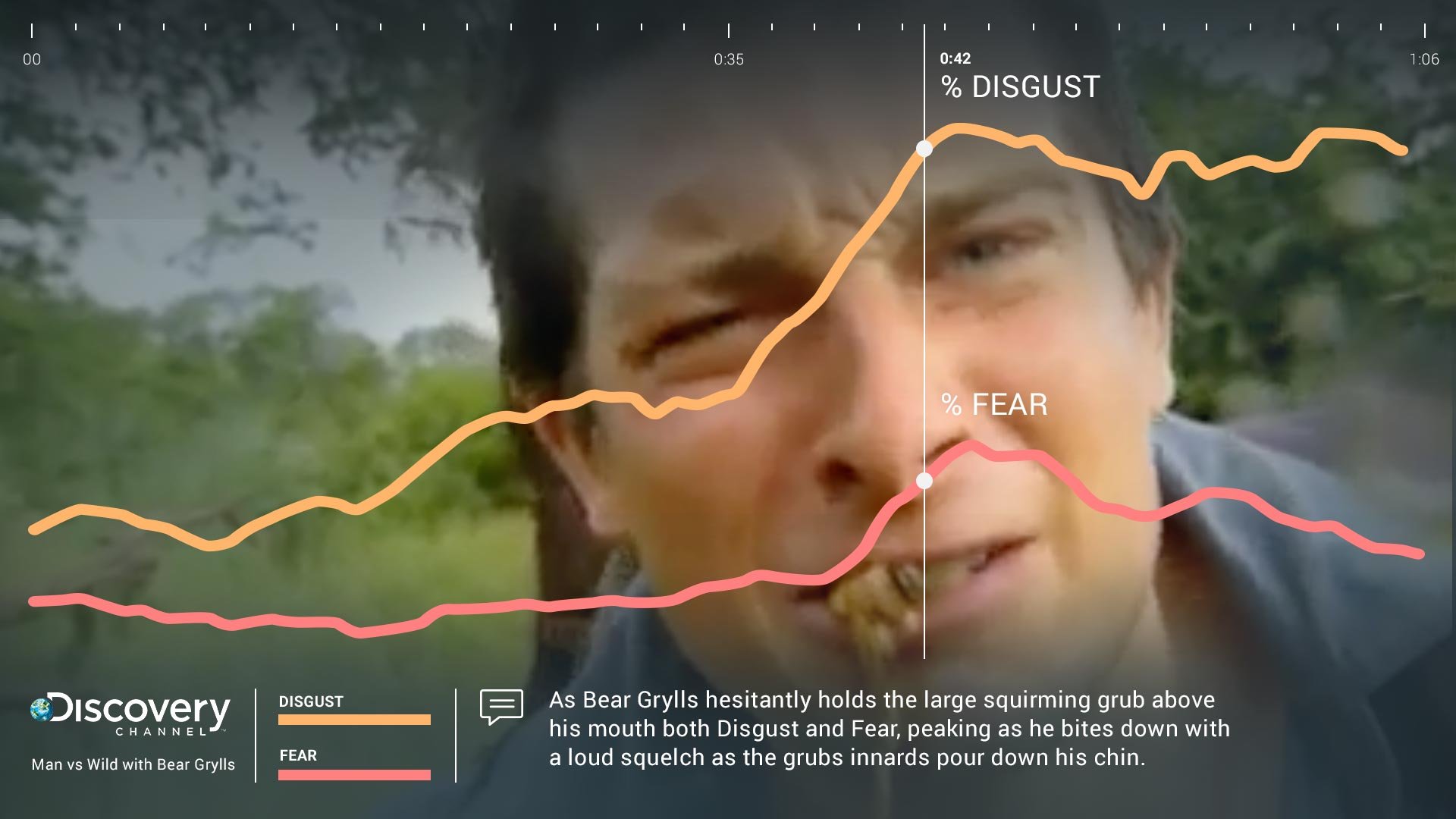

Example 2. 'Man vs Wild with Bear Grylls'

In Example 1, we have Olympian Simone Biles being stoic, practicing hard for the Rio Olympics. When she receives a box full of heartwarming wishes and breaks into a beaming smile of happiness, the viewers' emotions soar. Likewise, in Example 2, we have Bear Grylls chomping down on a large grub. The audience responds with both fear and disgust, peaking as he bites down and the grub's guts squirt over his chin. Nice.